Why Small Language Models (SLMs) matter more than ever

AI has long been dominated by Large Language Models (LLMs), those enormous, resource-hungry systems that power everything from chatbots to search engines. But let’s be honest: bigger isn’t always better. The AI landscape is shifting, and Small Language Models (SLMs) are leading the way. Why? Because they’re cheaper to run, faster to respond, less energy-hungry, and easier to keep private.

But here’s the problem: deploying an SLM is not plug-and-play. That’s where Visionarist changes the game. If you want to run your own AI—on your own infrastructure—without losing your mind over deployment headaches, keep reading.

The big problem with Large Language Models

💸 Cost and infrastructure nightmares

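LLMs are expensive. Using a frontier model like GPT-4? Expect per-token API fees that add up fast at scale. Self-hosting an open model of similar size? Expect to need a multi-GPU server. For many everyday tasks, it’s like driving a Formula 1 car to pick up groceries: overkill.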

🥷🏼 Privacy & security concerns

Most LLMs run on third-party servers, which means your sensitive data is being processed outside your control. If you’re in finance, healthcare, or law, that’s a nightmare waiting to happen.

🌱 The sustainability issue

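One widely cited 2019 study estimated that training a single large Transformer model with neural architecture search can emit as much CO2 as five cars over their lifetimes. Not exactly eco-friendly, right? Meanwhile, SLMs typically need a fraction of the energy to train and run, making them a smarter choice for businesses looking to reduce their carbon footprint.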

SLMs: the smarter, lighter alternative

Small Language Models are exactly what they sound like: lighter, optimized AI models designed for specific tasks. Unlike their heavyweight cousins, they don’t require insane hardware or constant internet access. They can run on your own infrastructure, which means:

✔️ Lower costs – No need for insane cloud bills

✔️ Faster response times – Leaner models, lower latency

✔️ More security & privacy – Keep your AI where you want it

✔️ Customizability – Train it for your specific business needs

With AI becoming an everyday necessity, SLMs are the obvious future. And the best part? Deploying one has never been easier—thanks to Visionarist.

Visionarist: the easiest way to deploy an SLM

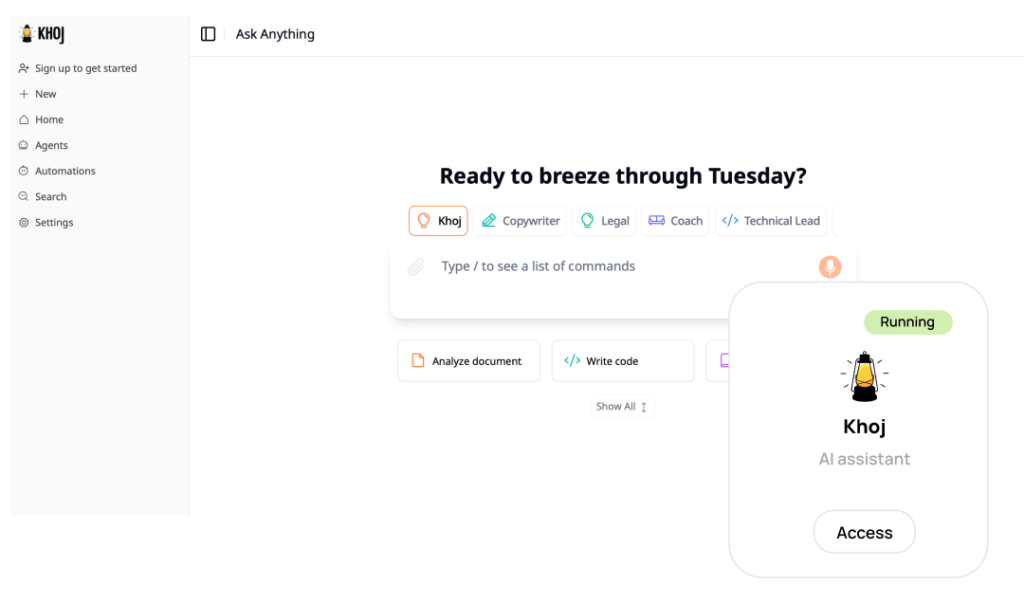

Here’s the good news: you don’t need to be a DevOps guru to deploy your own AI. Visionarist takes care of everything. Our platform lets you deploy Khoj, a powerful AI-powered search and knowledge assistant, and run your own SLM on top of it. No complex setup. No hidden fees. Just AI that works.

Here’s how it works:

1️⃣ Pick an SLM – Choose from models like Mistral 7B, TinyLlama, or Phi-2

2️⃣ Deploy Khoj with Visionarist – One click, and it’s live on your cloud

3️⃣ Integrate your SLM – Upload your model, connect it to Khoj, and start using it

No need for complicated configurations, expensive infrastructure, or sleepless nights troubleshooting deployments. Visionarist does the heavy lifting.
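Not sure which model from step 1 will fit on your hardware? A quick back-of-the-envelope check helps. The sketch below estimates how much memory the model weights alone would need at different precisions. It is only a rough guide, not part of Visionarist or Khoj: the parameter counts are approximate public figures, the `overhead` factor of 1.2 is a hypothetical allowance for runtime buffers, and the function names are made up for illustration.

```python
# Rough estimate of the memory needed just to hold model weights.
# Parameter counts are approximate public figures (assumption).
APPROX_PARAMS_BILLIONS = {
    "Mistral 7B": 7.2,
    "Phi-2": 2.7,
    "TinyLlama": 1.1,
}

# Bytes used per parameter at common precisions.
BYTES_PER_PARAM = {"fp16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gb(params_billions: float, precision: str) -> float:
    """Approximate weight memory in GB (1 GB = 1e9 bytes)."""
    total_bytes = params_billions * 1e9 * BYTES_PER_PARAM[precision]
    return total_bytes / 1e9


def fits(params_billions: float, precision: str,
         available_gb: float, overhead: float = 1.2) -> bool:
    """True if weights plus a hypothetical overhead fit in available memory."""
    return weight_memory_gb(params_billions, precision) * overhead <= available_gb


if __name__ == "__main__":
    for name, params in APPROX_PARAMS_BILLIONS.items():
        row = ", ".join(
            f"{prec}: {weight_memory_gb(params, prec):.1f} GB"
            for prec in BYTES_PER_PARAM
        )
        print(f"{name} -> {row}")
```

For example, `fits(7.2, "int4", 8.0)` asks whether a 4-bit Mistral 7B would fit in 8 GB of memory under these assumptions. Real usage also depends on context length and the serving software, so treat the numbers as a starting point.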

The power of Khoj: your gateway to SLMs

Before you can harness the full potential of an SLM, you need a robust framework to plug it into. That’s where Khoj comes in: a dynamic, open-source AI-powered search engine and personal knowledge assistant. Think of Khoj as the Swiss Army knife of information retrieval: it not only finds what you need but also learns from your interactions.

Why choose Khoj?

- Versatility: Khoj adapts to various use cases, from internal document search in enterprises to personalized digital assistants for busy professionals.

- Simplicity: With a few clicks, you can deploy Khoj via Visionarist, making it the perfect launchpad for integrating your SLM.

- Community and support: As an open-source tool, Khoj benefits from continuous improvements and community-driven enhancements, ensuring it remains cutting-edge

Integrating an SLM with Khoj can supercharge your AI applications, whether you’re looking to optimize customer service, enhance document retrieval, or even automate routine tasks.

Why businesses are moving to Visionarist

Companies are realizing that renting AI from a third party isn’t sustainable. With Visionarist, they’re taking control of their AI stack:

✔️ Legal firms are using SLMs with Khoj to summarize contracts instantly.

✔️ Tech companies have cut internal search times by 80%.

✔️ Healthcare providers are deploying AI assistants while keeping patient data on their own infrastructure.

Visionarist isn’t just another AI tool. It’s a paradigm shift—one where companies own their AI, instead of renting it at a premium.